Red Pajama 2: The Public Dataset With a Whopping 30 Trillion Tokens

Together, the dataset's developer, claims it is the largest public dataset built specifically for language model pre-training.

Leaderboard: OpenAI's GPT-4 Has Lowest Hallucination Rate

Product & Engineering Archives - Pear VC

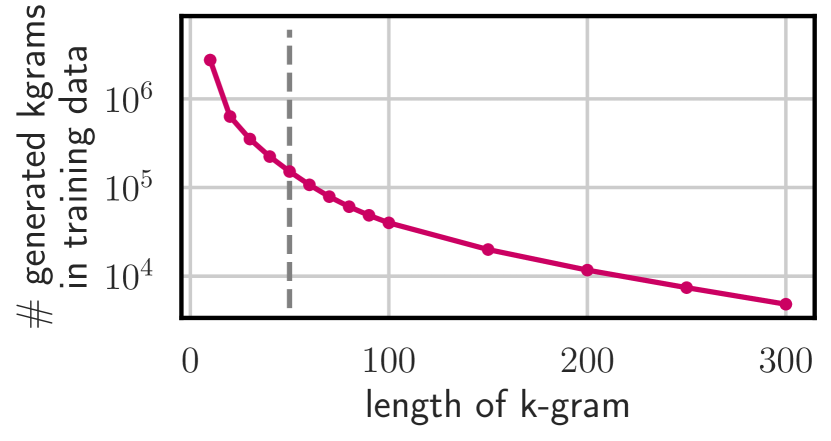

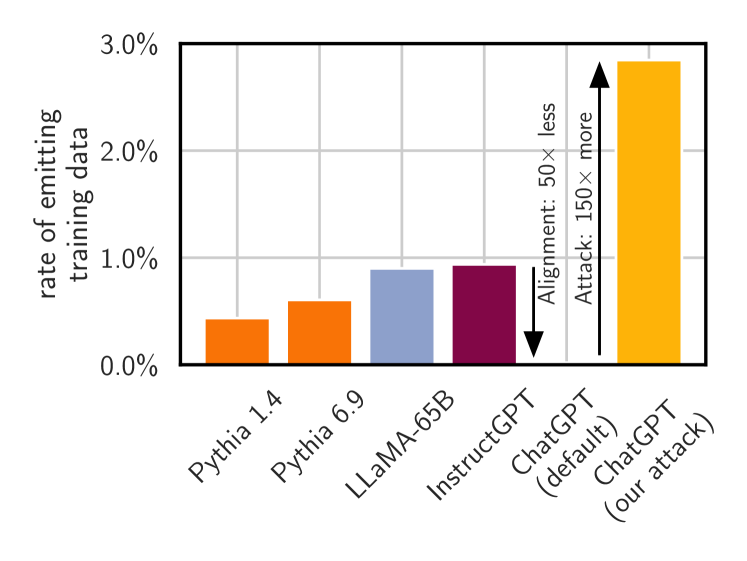

[2311.17035] Scalable Extraction of Training Data from (Production) Language Models

Denys Linkov on LinkedIn: Together.ai releases a new LLM dataset called Red Pajama two, which is 30x…

GitHub - togethercomputer/RedPajama-Data: The RedPajama-Data repository contains code for preparing large datasets for training large language models.

Integrated AI: The sky is comforting (2023 AI retrospective) – Dr Alan D. Thompson – Life Architect

RedPajama-Data-v2: an Open Dataset with 30 Trillion Tokens for Training Large Language Models : r/LocalLLaMA

Top 10 List of Large Language Models in Open-Source

Together AI Releases RedPajama v2: An Open Dataset with 30 Trillion Tokens for Training Large Language Models - MarkTechPost

Releasing 3B and 7B RedPajama-INCITE family of models including base, instruction-tuned & chat models

Data management recent news
